"We need a language structure to communicate in human-AI collaboration."

About

This project introduces a web tool that helps instructors and a Large Language Model (LLM) collaboratively build a structured representation that connects teaching goals to gameplay decisions through four linked language components. By making pedagogical intent explicit, editable, and traceable throughout the design process, the system aims to reduce barriers for non-expert game designers while preserving human control over critical educational choices. The work contributes both a conceptual framework and an interface design that shows how language can serve as a transparent bridge between pedagogy and play in AI-supported educational game design.

The Language Framework

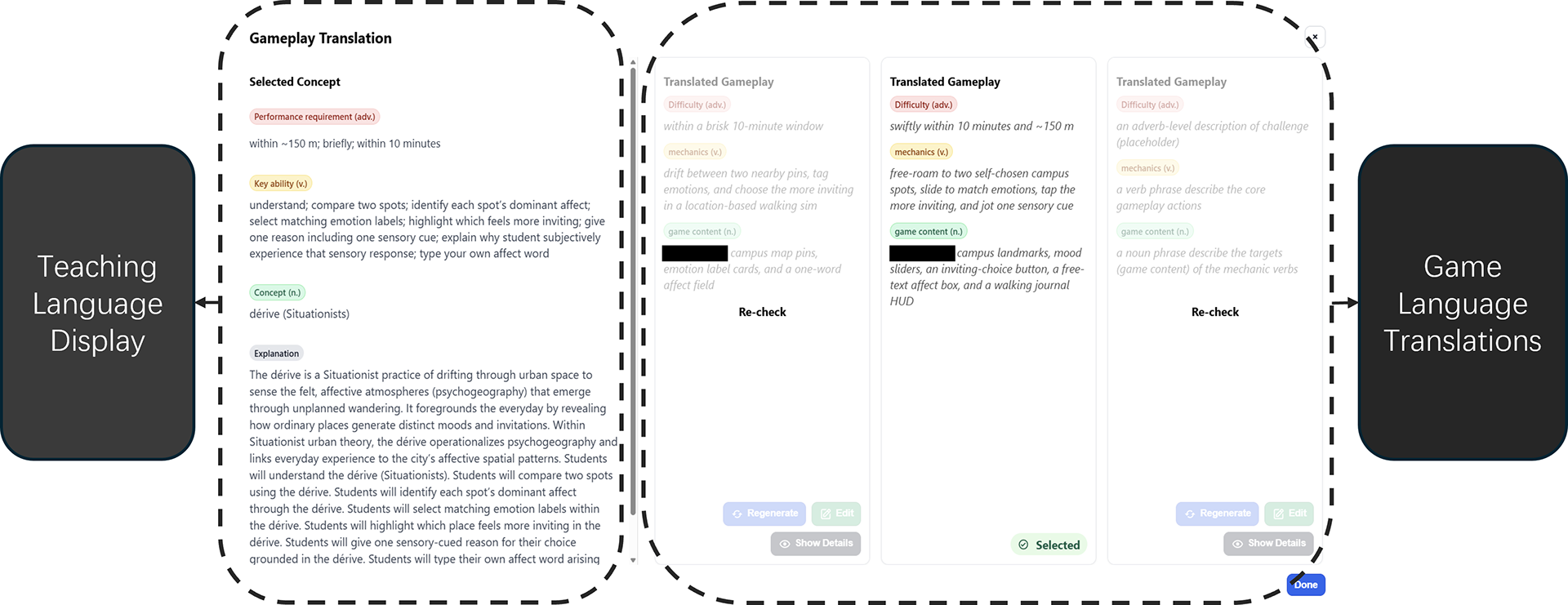

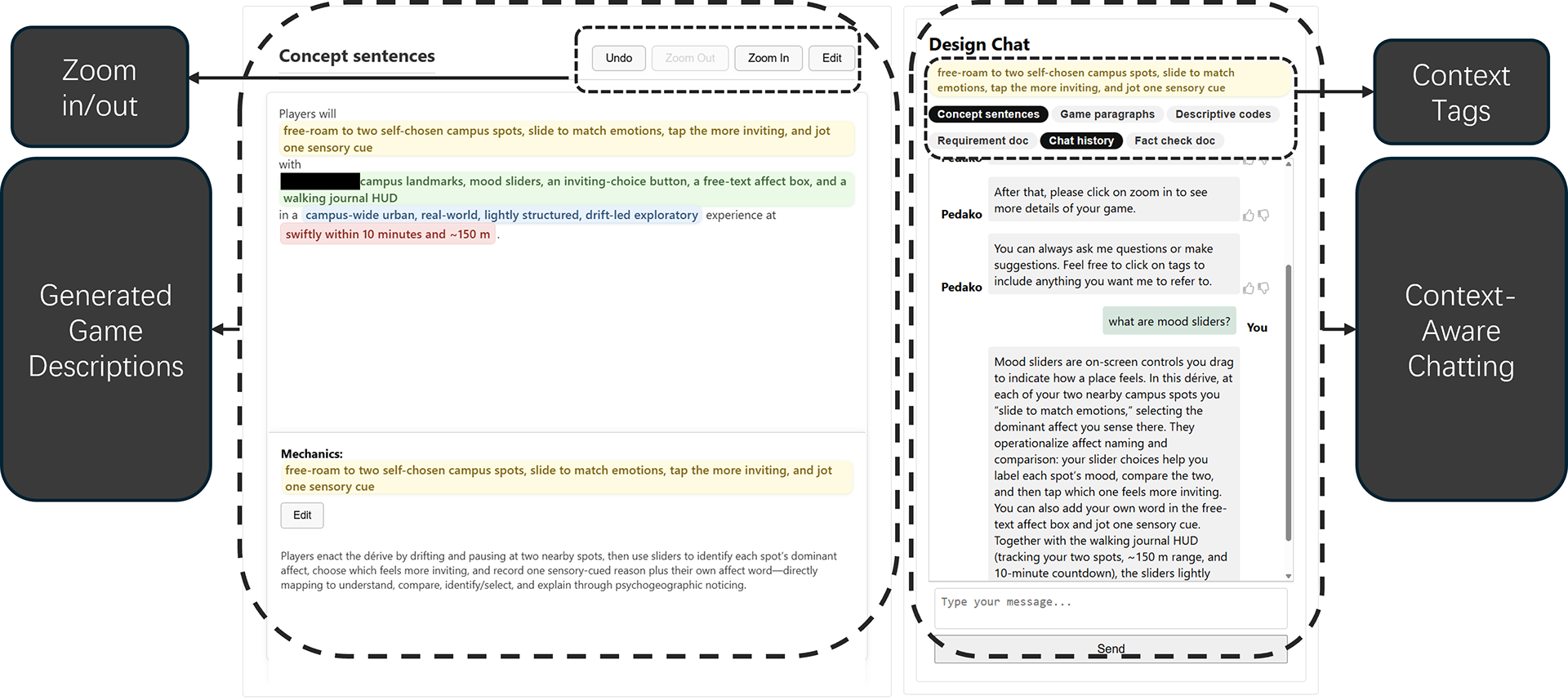

The language-mapping framework represents educational intent as a single controlled sentence: “Players (Learners) [Adverbs] [Verbs] [Nouns] in a [Adjectives] environment.” In this structure, verbs define the target learning action, nouns define the focal concept or content, adverbs specify how performance should occur, and adjectives define the tone, realism, or contextual framing of the learning environment. The system uses these four elements to build a parallel game-side representation with the same structure, so each pedagogical choice has a visible counterpart in gameplay, such as mechanics, content representations, challenge conditions, and aesthetic framing. This makes the mapping a shared design artifact that both the instructor and the AI can inspect, revise, and develop together during co-creation.
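The controlled sentence and its game-side counterpart can be pictured as two records with the same four slots. The sketch below is only an illustration, not the tool's actual data model: the class name `LanguageSentence`, the helper `paired_slots`, and the chemistry example values are all assumptions made for this example.

```python
from dataclasses import dataclass

SLOTS = ("adverbs", "verbs", "nouns", "adjectives")


def _join(words: list[str]) -> str:
    """Join several words in one slot into a readable phrase."""
    return " and ".join(words)


@dataclass
class LanguageSentence:
    """Four-slot controlled sentence (hypothetical representation)."""
    adverbs: list[str]      # how performance should occur
    verbs: list[str]        # target action
    nouns: list[str]        # focal concept or content
    adjectives: list[str]   # tone, realism, or framing of the environment

    def render(self, subject: str = "Players (Learners)") -> str:
        """Fill the sentence template with the current slot values."""
        return (f"{subject} {_join(self.adverbs)} {_join(self.verbs)} "
                f"{_join(self.nouns)} in a {_join(self.adjectives)} environment.")


def paired_slots(pedagogy: LanguageSentence, game: LanguageSentence) -> dict:
    """Line up each teaching-side slot with its gameplay counterpart."""
    return {slot: (getattr(pedagogy, slot), getattr(game, slot)) for slot in SLOTS}


# Invented example values, for illustration only.
pedagogy = LanguageSentence(["accurately"], ["balance"],
                            ["chemical equations"], ["realistic"])
game = LanguageSentence(["quickly"], ["drag and drop"],
                        ["atom tiles"], ["lab-themed"])

print(pedagogy.render())
print(game.render(subject="Players"))
print(paired_slots(pedagogy, game)["verbs"])
```

Because both sides share the same slots, a change to one teaching-side word points directly at the gameplay element that may need to change with it.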

We need this framework because educational game design is difficult for many instructors, even when programming barriers are lowered. Open-ended prompting and many current AI tools can generate plausible ideas, but they often make it hard to see how those ideas connect to learning goals, why certain design choices were made, or how to revise them without starting over. In educational contexts, this problem matters because instructors remain responsible for pedagogical fit, content correctness, and student accessibility. The framework addresses this by keeping pedagogical intent explicit, editable, and traceable throughout the design process. Instead of asking instructors to reverse engineer AI output, it gives them a structured way to communicate intent, evaluate translations from pedagogy to gameplay, and maintain control over critical educational decisions.

The System

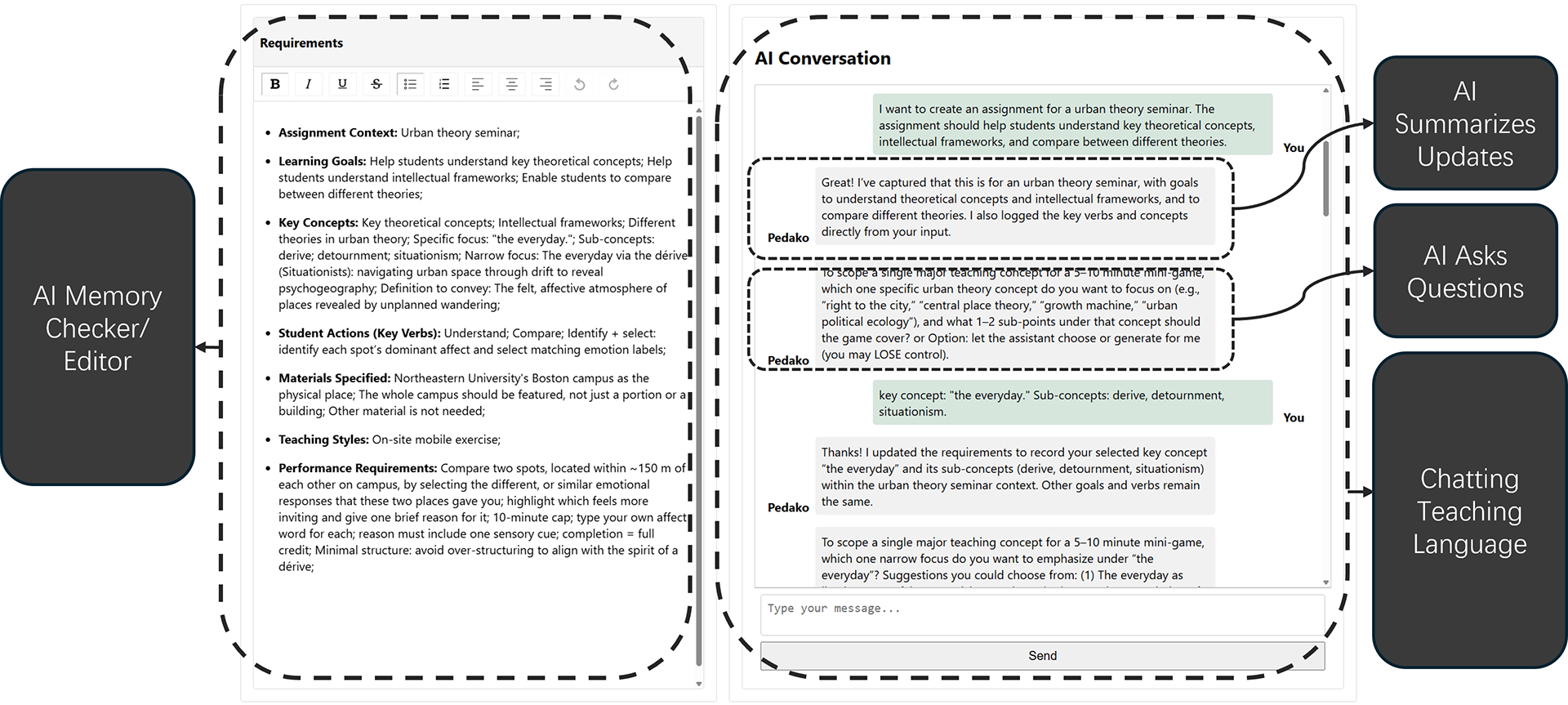

The system is designed as an LLM-based web application that guides instructors through three connected phases: requirement extraction, language translation, and language development. First, the AI asks structured questions to help instructors define the four elements of the language framework. Next, it translates that pedagogical description into multiple candidate game concepts while showing how each element maps from teaching language to gameplay language. Finally, instructors can refine the selected concept with AI support, expand it into descriptive gameplay explanations, and generate pseudocode that can guide later implementation. Across all phases, the interface keeps the mapping visible and editable so instructors can compare options, revise decisions, and maintain control over the design process.
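The three phases can be sketched as a small pipeline. This is a rough sketch under stated assumptions, not the system's implementation: the function names, the `Concept` class, the prompt wording, and the `fake_llm` stand-in (which replaces a real model call) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

SLOTS = ("adverbs", "verbs", "nouns", "adjectives")

# Hypothetical stand-in for a model call: takes a prompt, returns text.
LLM = Callable[[str], str]


@dataclass
class Concept:
    """One candidate game concept with a per-slot teaching -> gameplay mapping."""
    mapping: dict[str, tuple[str, str]]
    history: list[str] = field(default_factory=list)

    def revise(self, slot: str, new_game_term: str) -> None:
        """Phase 3: change one gameplay term and log the change for traceability."""
        teaching_term, old_game_term = self.mapping[slot]
        self.mapping[slot] = (teaching_term, new_game_term)
        self.history.append(f"{slot}: {old_game_term} -> {new_game_term}")


def extract_requirements(answers: dict[str, str]) -> dict[str, str]:
    """Phase 1: keep the four framework slots and require an answer for each."""
    missing = [slot for slot in SLOTS if not answers.get(slot)]
    if missing:
        raise ValueError(f"unanswered slots: {missing}")
    return {slot: answers[slot] for slot in SLOTS}


def translate(pedagogy: dict[str, str], llm: LLM, n_candidates: int = 3) -> list[Concept]:
    """Phase 2: request a gameplay counterpart for every slot, once per candidate."""
    concepts = []
    for i in range(n_candidates):
        mapping = {
            slot: (term, llm(f"Candidate {i + 1}: gameplay counterpart for {slot} '{term}'"))
            for slot, term in pedagogy.items()
        }
        concepts.append(Concept(mapping))
    return concepts


def fake_llm(prompt: str) -> str:
    """Deterministic placeholder so the sketch runs without a model."""
    return f"[suggestion for: {prompt}]"


# Invented instructor answers, for illustration only.
pedagogy = extract_requirements({
    "adverbs": "accurately", "verbs": "balance",
    "nouns": "chemical equations", "adjectives": "realistic",
})
candidates = translate(pedagogy, fake_llm)
chosen = candidates[0]
chosen.revise("verbs", "tile matching")
print(chosen.mapping["verbs"])
print(chosen.history)
```

Keeping the mapping and its revision history on the concept itself is one way to keep every instructor edit visible alongside the suggestion it replaced.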

How useful is it?

In a preliminary user study with 16 higher-education instructors, the framework helped participants turn vague teaching ideas into concrete game-design directions without requiring them to think like game designers from the start. Instructors used the framework to clarify what they wanted students to learn, compare AI-generated alternatives more systematically, and reflect on whether a game actually served their pedagogical goals. It also supported trust by making the AI’s reasoning more legible, and it supported progress by breaking the design task into manageable decisions. Some instructors noted, however, that structured options could narrow exploration if accepted too quickly.